Ready to get started?

No matter where you are on your CMS journey, we're here to help. Want more info or to see Glide Publishing Platform in action? We got you.

Book a demo

As a small civil war breaks out at the AP over LLM usage, it shows how AI content is forcing organisations once known for quality to make some hard decisions.

Everything you read is true, until it's about something of which you actually have knowledge.

This mantra has stuck with me for many years, and acts as a form of brake on my own urge to jump to rapid conclusions from any information I have digested - not always successfully, it has to be said.

When it comes to LLMs, the most meaningful curation layer still sits at the level of training data. One of the many ways in which the AI industry is extracting skilled value for free, without returning any of that wealth to the skilled, is precisely at the level of curation. The stochastic parrot must have the highest quality bird feed to dine on, and we as writers and journalists and editors provide that food, having sorted the husks from the seed already.

Thinking about this vital curation layer, which must exist for raw information to be made useful, a report concerning some agonising at the Associated Press recently over the use of AI systems is illustrative.

Responding to concerns from AP journalists over the use of AI to assemble stories, a senior AI product manager told staff, via a company Slack channel, that: “There are many — and I mean MANY — editors who would prefer an AI-written article to a human-written one. Reporting and writing are two different skill sets and rare - RARE - is the occasion when it’s wrapped into one person."

Well that is true. Anyone who has been an editor in charge of a reporting staff knows it. Some can write, some can report, and there is every shade of ability between those two poles, including a reporter doing an unusually good job because the subject is something they actually care about.

My input

I've edited a lot of AP stories in my time. The reason is that I worked for a British publication, and AP - which we used among other wire services - wrote in an American style that often meant their best stories would have at least four establishing paragraphs of colour writing before you could reach what the actual story was.

It was a writerly style, and one anathema to the pacier British hard news style in which the salient details are expressed at the very top of the story. The AP loved a drop intro, and on many occasions so did I - I can still make an argument for that style of news writing, even if it wasn't how I was trained.

Whatever its place, I always thought that, amongst the conflicting market preferences on display, it at least showed a love of writing itself, and - placed as a core culture of AP alongside accuracy and relevance - was strongly tied to the organisation's value to its customers. Consequently, it's not difficult to see why the product manager's Slack post has caused a little consternation.

Good reporting is more than just getting quotes, although quotes underpin the veracity from which good news reporting derives its true value. A good reporter on the spot doesn't just get quotes; they use the full sensory suite available to Homo sapiens. And it's quite the sensory package.

So if your stories come from a workflow that largely consists of gathering quotes, feeding them into an LLM with the appropriate prompts - not an exact science, right? - and then passing the content package this system spits out to an editor for polishing (or more likely complete copy demolition and rebuild), I think you are reducing the output value of your reporters, and it's predictable they won't be happy about it.

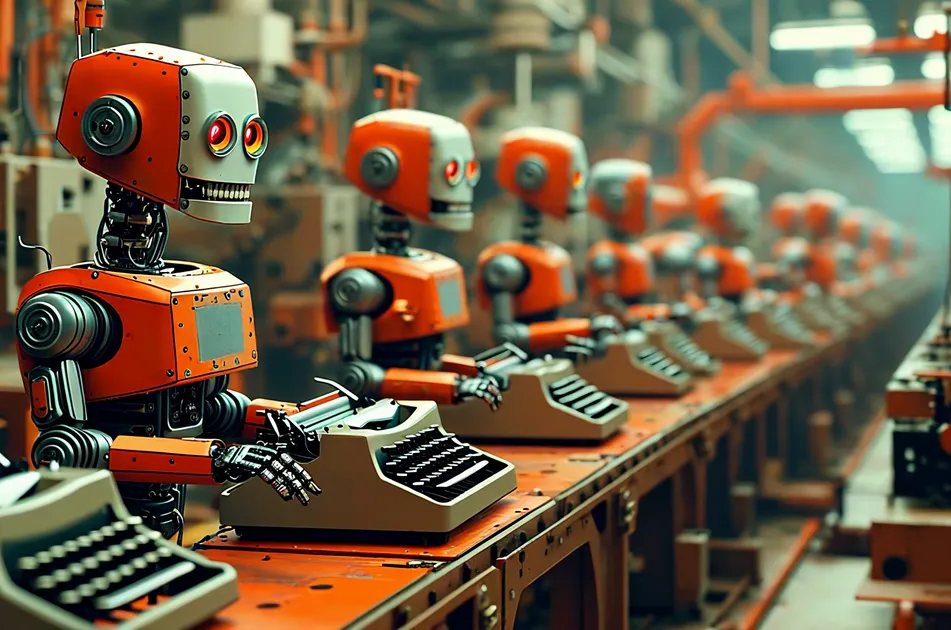

If you treat people like they're stupid, they will be stupid. Bad reporter = bad story. If you erode one of the unique propositions you project to clients, you risk, I think, becoming just another content sausage factory.

Output can't always be measured

It's interesting to place this risk against the burgeoning sole creator market in journalism, including the wealth of journalistic effort that now lives on YouTube channels, or on Substack, or any such platform where people can directly address themselves to an audience. This is a thriving area, sometimes for better, sometimes for worse, yet it thrives through its very individuality and its personal curation.

Clearly, if this is something audiences desire, it's hard to see how a watered-down version of the AP voice, blandified by an LLM, could make sense, and I assume this will be at the root of what AP's reporters and product managers will most want to avoid. In the era of authenticity, it's what the public thinks that matters, and if the output is notable only for being something an AI could produce from basic notes, then there's not much preventing someone else doing that either.

Obviously, AP have struggled financially in recent years, striking deals with AI outfits as part of their survival strategy, yet I'm reminded of a recent quote from Robert Thomson, CEO of News Corp, who characterised his business as "essentially an input company". That is to say, in the age of LLMs, "we’re an input in the way that semiconductors are an input, in the way data centres are an input, in the way that energy is an input".

I'm not entirely convinced by this, because of the way news organisations still help stitch the fabric of the societies they serve - again, for better or worse. It's not as simple as inputs.

That said, there can be no value in making one's journalistic "input" less distinctive, and that seems a possible outcome as the AP faces the future.
